Giving teachers the right information, at the right moment

Class Insights, made simple

Problem:

Teachers have 45 minutes to manage a full classroom: students who are behind, students who are ahead, and everyone in between. The data to support them exists, but it lives inside dashboards nobody has time to open before the class ends.

Hypothesis:

By surfacing class intelligence through a conversational AI assistant, embedded directly in the teacher's daily workflow, we can reduce the time-to-insight to near-zero and make the platform's data feel useful instead of administrative.

Identifying Jobs-to-be-done

Mapping the problem and current experience

Start the day with clarity: know immediately who needs attention, without opening a dashboard

Identify students ready for more: surface who's finished and ready for a challenge

Get context, not just data: receive a recommendation, not a raw number

Designing for the moment, not the feature

The first step was deceptively simple: don't make teachers think about how to use AI.

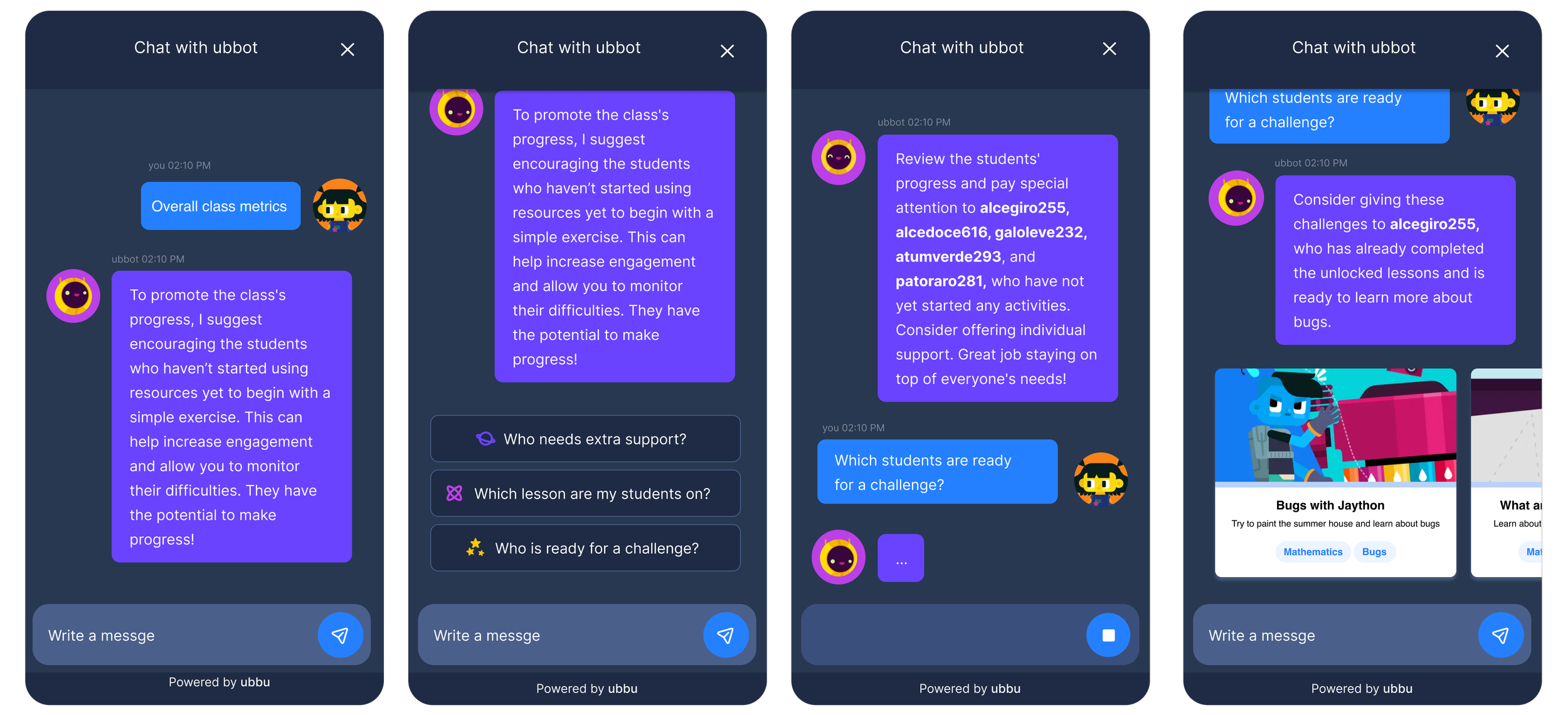

Instead of an open chat field, teachers are greeted with a set of pre-built prompts ("Who needs extra support?", "Who is ready for a challenge?", "Which lesson are my students on?") mapped to the exact questions they're already asking themselves at the start of every class.

ubbot answers in plain language. Not "5 students haven't started" but "Take a look at alcegiro255, alcedoce616, galoleve232, atumverde293, and patoraro281: they haven't started yet. A quick check-in might help."
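One way to picture the preset-prompt approach is a fixed lookup from each prompt to a function that reads progress records and returns a sentence. The following is a minimal sketch, not the shipped implementation: the `StudentProgress` fields, the handler functions, and the fallback message are all assumptions made for illustration, and only two of the three prompts are covered.

```python
from dataclasses import dataclass


@dataclass
class StudentProgress:
    # Hypothetical record shape; the real platform schema is not described here.
    username: str
    lessons_started: int
    lessons_completed: int
    lessons_assigned: int


def join_names(names):
    """Join names as 'a', 'a and b', or 'a, b, and c'."""
    if len(names) <= 2:
        return " and ".join(names)
    return ", ".join(names[:-1]) + ", and " + names[-1]


def needs_support(students):
    """Name the students who have not started, with a suggested next step."""
    not_started = [s.username for s in students if s.lessons_started == 0]
    if not not_started:
        return "Everyone has started. No check-ins needed right now."
    return (
        f"Take a look at {join_names(not_started)}: they haven't started yet. "
        "A quick check-in might help."
    )


def ready_for_challenge(students):
    """Name the students who completed everything assigned to them."""
    done = [
        s.username
        for s in students
        if s.lessons_assigned > 0 and s.lessons_completed >= s.lessons_assigned
    ]
    if not done:
        return "No one has finished all of their assigned lessons yet."
    return f"{join_names(done)} finished their assigned lessons and may be ready for a challenge."


# Closed set of prompts: anything outside this table is never answered freely.
PRESET_PROMPTS = {
    "Who needs extra support?": needs_support,
    "Who is ready for a challenge?": ready_for_challenge,
}


def answer(prompt, students):
    """Answer a preset prompt from real records, or steer back to the presets."""
    handler = PRESET_PROMPTS.get(prompt)
    if handler is None:
        return "Please pick one of the suggested questions."
    return handler(students)
```

Because every answer is assembled from the records themselves, the assistant can only name students who actually appear in the data, which is the property the constrained design is meant to protect.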

On the AI models

I advocated for a closed conversational model in v1. Open-ended chat increases hallucination risk and can erode teacher trust.

Starting constrained, with responses grounded in real student data, meant we could ship something teachers could actually rely on. The product can always grow. Trust, once broken mid-class, is nearly impossible to rebuild. Constrained scope in v1 was a deliberate risk decision, not a design limitation.

Using this Delegation and Diligence Loop, we can build a v2 and move the project forward with confidence.

Back to the drawing board

or our first learning metric

ubbot tells teachers who's behind. But it can't tell them why.

We kept coming back to the same question: is a student stuck because they haven't started, or because they started and didn't understand? Progress data alone can't answer that. We needed a different kind of signal, and we didn't want to design another test.

Problem: We had no reliable way to distinguish students who were behind from students who hadn't understood the material. Without that, any support the teacher offered was a guess.

Assumption: A lightweight knowledge check, framed not as a test but as an affirmation of what students already know, would give us our most important data point yet: a real learning metric, not just an engagement proxy.

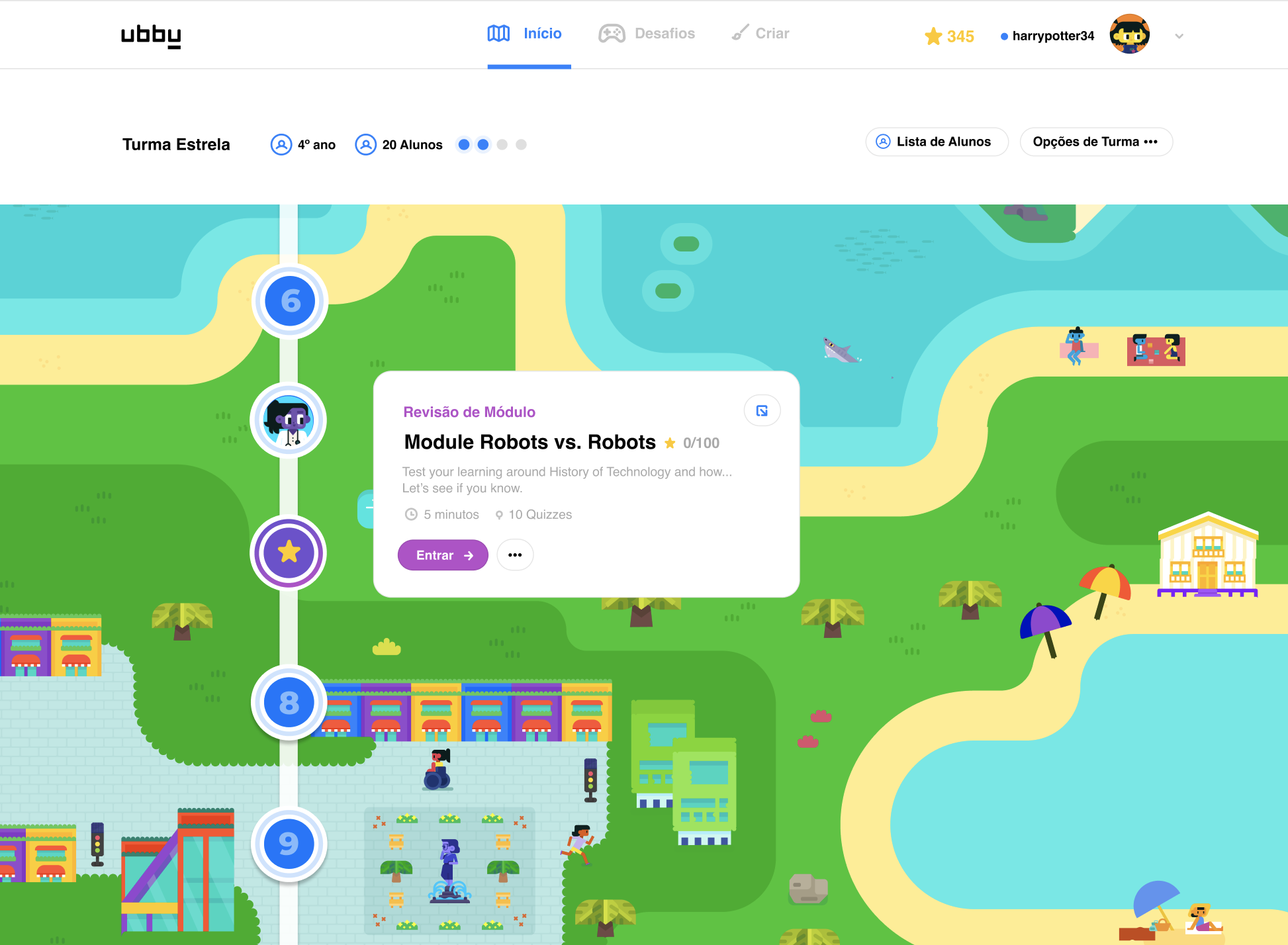

The result is Module Review. At the end of each module, students complete a short multiple-choice quiz that appears automatically at the right moment: no setup, no friction. It's quick to complete and designed to feel like a natural step in the journey, not an evaluation.

For teachers, it closes the loop that ubbot opens. Soon, individual results will be visible in the dashboard, giving them a fast, reliable way to see who's ready to move forward and who might need a different kind of support.

This is our first true learning metric. Not lessons started. Not resources played. Whether the concept actually landed.
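To make the distinction concrete, here is a rough sketch of how a progress signal and a Module Review result could be combined into a single label per student. Everything in it is hypothetical: the `ModuleSnapshot` fields, the label names, and the 0.7 pass threshold are assumptions for illustration, not values taken from the product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cut-off: fraction of Module Review questions answered correctly.
PASS_THRESHOLD = 0.7


@dataclass
class ModuleSnapshot:
    # Hypothetical record shape combining engagement and learning signals.
    username: str
    lessons_started: int
    review_score: Optional[float]  # None if the Module Review was not taken yet


def support_signal(snapshot, pass_threshold=PASS_THRESHOLD):
    """Separate 'hasn't started' from 'started but the concept didn't land'."""
    if snapshot.lessons_started == 0:
        # Engagement problem: there is nothing to assess yet.
        return "not_started"
    if snapshot.review_score is None:
        # Working through the module; no learning signal available yet.
        return "in_progress"
    if snapshot.review_score < pass_threshold:
        # Did the work, but the review suggests the concept didn't land.
        return "needs_different_support"
    return "ready_to_move_on"
```

The point of the sketch is that progress data alone can only ever produce the first two labels; the last two exist only once a learning signal such as the review score is available.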

“We believe that every child has their own spark and learns best in a way that reflects their individuality. AI-powered tools in our educational platforms are fundamental to unlocking personalized educational experiences that not only engage students but also equip teachers with the insights and resources to support each student’s unique journey.”

- Mariana Penido, Product Director at Arco